Y’all. Let me tell you about the morning I woke up to a series of emails from OpenAI that were not the good kind.

Overnight, while I was asleep, someone had been running up my API key. OpenAI kept emailing me saying the account was being topped up. Over and over. By the time I saw the emails, the damage was done: about $20 worth of API usage, which translates to millions of tokens. Somebody was having themselves a very productive night on my dime.

Here’s how it happened, and more importantly, here’s what you need to know if you are building anything with AI APIs right now.

The Backstory

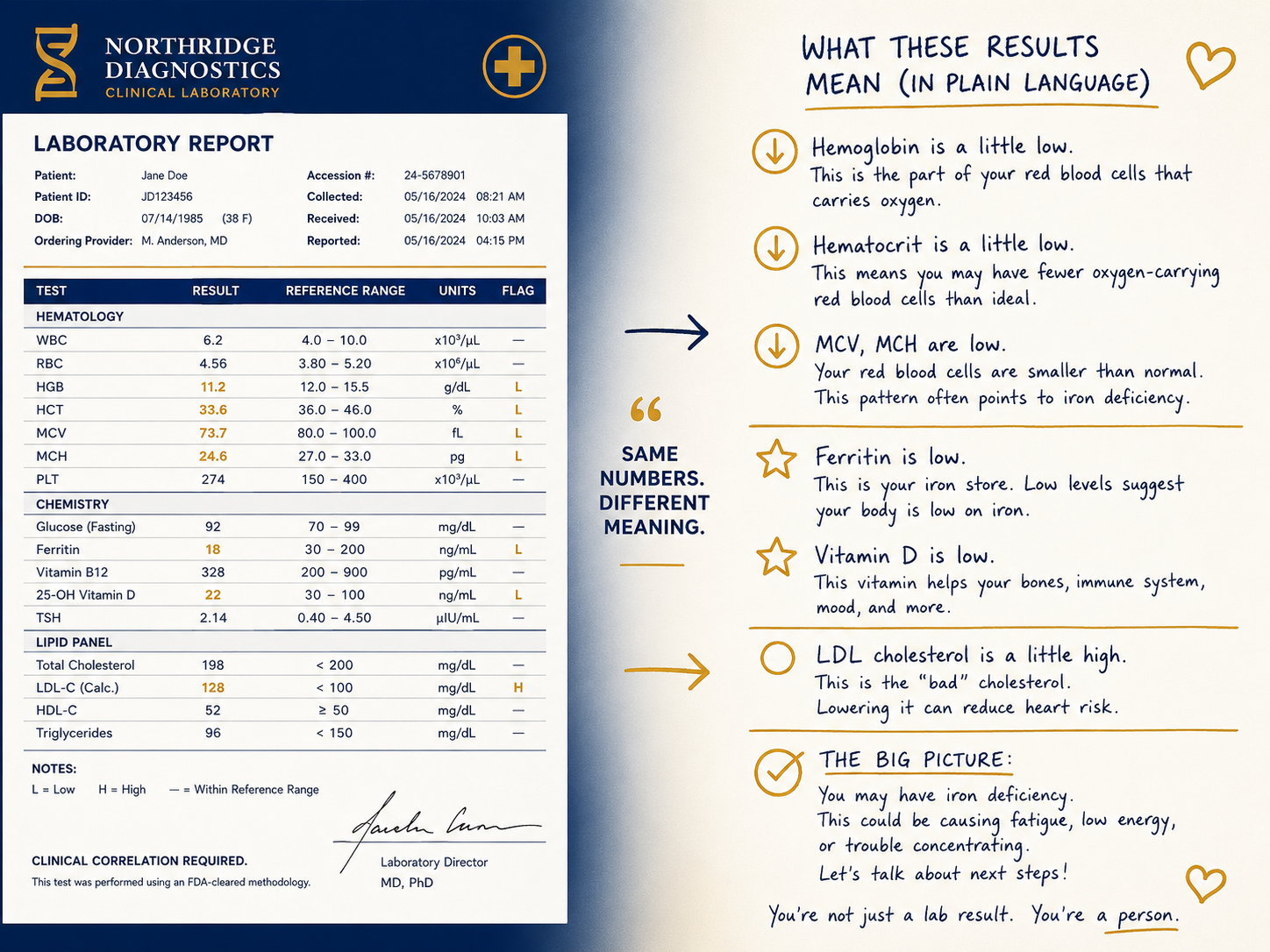

A couple of years ago I vibe coded an app called Lab Test Insights. You can find it at labtestsandcites.com. It’s a tool where you can ask questions about lab results. If your bloodwork comes back with an abnormal value and you’re not sure what it means, you can go there and get a plain-language explanation plus a question to bring to your doctor. I was proud of it. It worked. I moved on.

A few months ago, a stranger emailed my business inbox. The message said something like: “Hey, your API key is exposed. I can help you fix it.”

I thought it looked sketchy. I deleted it and kept moving.

Reader, it was not sketchy.

What Actually Happened

I built Lab Test Insights in Lovable, which is one of those AI-powered vibe coding platforms that lets you build functional apps fast without being a trained software engineer. It is great for getting things off the ground quickly. What it is not great at — or at least what it did not do for me in this case — is locking down security by default.

Here’s the technical piece that matters: the app was built with Vite and React. In Vite, any environment variable whose name starts with VITE_ is exposed to your frontend code, and its value gets baked into the bundle at build time. That bundle gets shipped to the browser. Which means anyone who opens DevTools and searches the loaded JavaScript can see it sitting right there.

My OpenAI API key was living in the browser bundle like it had no shame whatsoever.
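If you want to see the rule in miniature, here is a small sketch. It is not Vite's actual code, just a stand-in that mimics the documented prefix rule. The helper name `clientVisibleEnv` and both example values are made up for illustration.

```typescript
// Hypothetical helper: mimics Vite's documented rule that only variables
// whose names start with the prefix (default "VITE_") reach client code.
function clientVisibleEnv(
  env: Record<string, string>,
  prefix: string = "VITE_",
): Record<string, string> {
  const visible: Record<string, string> = {};
  for (const name of Object.keys(env)) {
    if (name.startsWith(prefix)) {
      visible[name] = env[name];
    }
  }
  return visible;
}

// Made-up values for illustration only; neither is a real key.
const exampleEnv: Record<string, string> = {
  VITE_OPENAI_API_KEY: "sk-example-not-a-real-key",
  OPENAI_API_KEY: "sk-example-server-side-only",
};

// Only the VITE_-prefixed name survives, so only that value would ship
// to the browser.
const shipped = clientVisibleEnv(exampleEnv);
console.log(Object.keys(shipped));
```

The takeaway: the prefix is an opt-in to publishing the value. A variable without the prefix stays out of the client bundle, which is why secrets belong in unprefixed, server-side variables.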

Someone found it, grabbed it, and either used it themselves or sold it. Based on the volume of usage overnight, I’m leaning toward it being sold on the dark web. Millions of tokens of usage in a few hours is not a casual user. That’s someone who bought a working API key and immediately got to work.

The $20 Lesson

Here is the part where I have to give myself a tiny bit of credit: I had set a billing threshold on my OpenAI account. I cannot spend more than $20 in a month without hitting a limit. That ceiling is the only reason this story ends at $20 and not at $200 or $2,000.

That billing threshold was not intentional security planning on my part, honestly. I set it because I am budget-conscious. But it turned out to be the thing that kept a bad situation from getting significantly worse.

Lessons Learned

1. Don’t ignore emails about security vulnerabilities…even ones that look sketchy.

If a stranger emails you saying your API key is exposed, take 10 minutes to verify it before you delete it. I wish I had. The email I received was legitimate and I ignored it for months.

2. VITE_ means public.

If you are building with Vite and you have a secret (an API key, a token, anything that connects to a paid service), it does not belong in a VITE_ environment variable. Full stop. Anything prefixed with VITE_ will be visible in the browser. Other frontend tools have their own version of this prefix (Next.js uses NEXT_PUBLIC_, Create React App uses REACT_APP_), and the same rule applies.

3. API calls that involve secrets belong on the backend.

The fix for my app was moving the OpenAI API calls to a serverless function on Netlify. The key now lives server-side and never touches the browser. It took about an hour to implement and I should have done it from the beginning.

4. Set billing thresholds on every API account you have.

Go do this right now if you haven’t. OpenAI, Anthropic, Google, whatever you’re using. Set a monthly limit that will stop runaway usage before it becomes a real financial problem. This one saved me.

5. Rotate your API keys after any suspected exposure.

As soon as I realized what had happened, I revoked the exposed key and generated a new one. If there is any chance your key has been seen, treat it as compromised and replace it immediately.
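To make lesson 3 concrete, here is a minimal sketch of the server-side pattern. It is not the actual code from Lab Test Insights. The names `makeExplainHandler` and `FetchLike`, the 2000-character cap, and the model name are all assumptions for illustration. The idea is that the browser sends only the question, and the server adds the key before calling OpenAI.

```typescript
// Minimal shapes so the sketch does not depend on any platform typings.
type UpstreamResponse = { ok: boolean; status: number; json(): Promise<unknown> };
type FetchLike = (
  url: string,
  init: { method: string; headers: Record<string, string>; body: string },
) => Promise<UpstreamResponse>;
type ProxyResult = { status: number; body: unknown };

// Hypothetical factory: the key is handed in on the server (for example from
// an environment variable with no VITE_ prefix) and never leaves it.
function makeExplainHandler(apiKey: string, fetchImpl: FetchLike) {
  return async (question: string): Promise<ProxyResult> => {
    const trimmed = question.trim();
    // Reject empty or oversized input before spending any tokens.
    if (trimmed.length === 0 || trimmed.length > 2000) {
      return { status: 400, body: { error: "Question must be 1 to 2000 characters." } };
    }

    const upstream = await fetchImpl("https://api.openai.com/v1/chat/completions", {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`, // attached server-side only
      },
      body: JSON.stringify({
        model: "gpt-4o-mini", // placeholder model name
        messages: [{ role: "user", content: trimmed }],
      }),
    });

    if (!upstream.ok) {
      // Do not echo upstream details back to the browser.
      return { status: 502, body: { error: "Upstream request failed." } };
    }
    return { status: 200, body: await upstream.json() };
  };
}
```

In a real serverless function you would wrap this in your platform's handler signature, pass in the server-side environment value and a fetch-compatible function, and have the frontend call your function's URL instead of OpenAI directly. Passing `fetchImpl` as a parameter is a design choice that makes the handler easy to test with a fake.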

The Vibe Coding Reality Check

I want to be honest about something because I think it matters.

Vibe coding is genuinely powerful. I have built things that work, that help people, and that I am proud of, and I could not have built them as fast without these tools. But there is a gap between “this app works” and “this app is secure,” and most vibe coding platforms are optimized for the first thing, not the second.

That is not a reason to stop building. It is a reason to build with intention.

Before You Deploy Anything: Get a Security Review

This is the recommendation I wish someone had given me before I launched Lab Test Insights.

Before you take any vibe-coded app live, especially one that uses an API with billing attached, run the codebase through a coding agent specifically for security vulnerabilities. Claude Code and OpenAI Codex are both solid options for this. You can literally say: “Review this codebase for security vulnerabilities, with particular attention to exposed credentials, insecure API calls, and frontend data leakage.” It takes minutes and it will catch things that the vibe coding platform didn’t.

You built the thing. Spend 20 more minutes having an AI check it before you put it in front of the world.
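As a quick complement to that review, you can also check your built bundle for anything key-shaped yourself. The sketch below is a rough heuristic, not a real secret scanner. The helper name `findExposedKeys` is hypothetical, and the pattern assumes OpenAI-style keys that start with "sk-" followed by a long run of letters, digits, underscores, or hyphens. Other providers need their own patterns.

```typescript
// Assumed pattern: "sk-" followed by at least 20 key-like characters.
const KEY_PATTERN = /sk-[A-Za-z0-9_-]{20,}/g;

// Hypothetical helper: returns redacted previews of anything key-shaped,
// so the scan output itself does not leak the full secret.
function findExposedKeys(bundleText: string): string[] {
  const matches = bundleText.match(KEY_PATTERN) ?? [];
  return matches.map((key) => `${key.slice(0, 6)}...(${key.length} chars)`);
}

// Made-up bundle text with a fake key, for illustration only.
const fakeBundle = 'const k="sk-abcdefghijklmnopqrstuvwxyz123456";fetch(url)';
console.log(findExposedKeys(fakeBundle));
```

To use it on a real project, read in the text of the JavaScript files your build produces (with Vite's defaults these typically land under dist/assets) and pass each one to the function. Any hit means the key should be treated as compromised and rotated.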

Vibe Coding Best Practices (The Short List)

- Never put API keys or secrets in frontend environment variables

- Move all API calls involving credentials to a backend or serverless function

- Set billing thresholds on every pay-per-use API account

- Run your codebase through Claude Code or Codex for a security review before deploying

- Don’t ignore emails about exposed credentials, even if they look suspicious

- Rotate keys immediately after any suspected exposure

- Check your API usage dashboards periodically — unusual spikes are a red flag

The Bottom Line

This was a $20 lesson. I got off easy. Someone else might not.

If you are building with AI APIs and you have not thought carefully about where your keys live and who can see them, this is your sign. Go check. Right now. I’ll wait.

And if you want to follow along as I keep building, and keep making mistakes in public so you don’t have to, Lab Notes is where I document all of it.

See you next week.

Meredith

Meredith Hurston is a healthcare quality professional, doctoral student in AI and machine learning, and author of Algorithmic Oversight. Lab Notes publishes weekly at meredithhurston.com.