Healthcare Enterprise Isn’t Ready for Agentic AI. And the Governance Problem Is Harder Than It Looks.

I spend a meaningful chunk of my personal time working with agentic AI. On my own laptop, I use it daily for research synthesis, writing, and managing the kind of complex multi-step work that comes with being a doctoral student and a working professional at the same time. It reads documents, drafts content, runs tasks in sequence, and works directly within my file system. It has genuinely changed how I work.

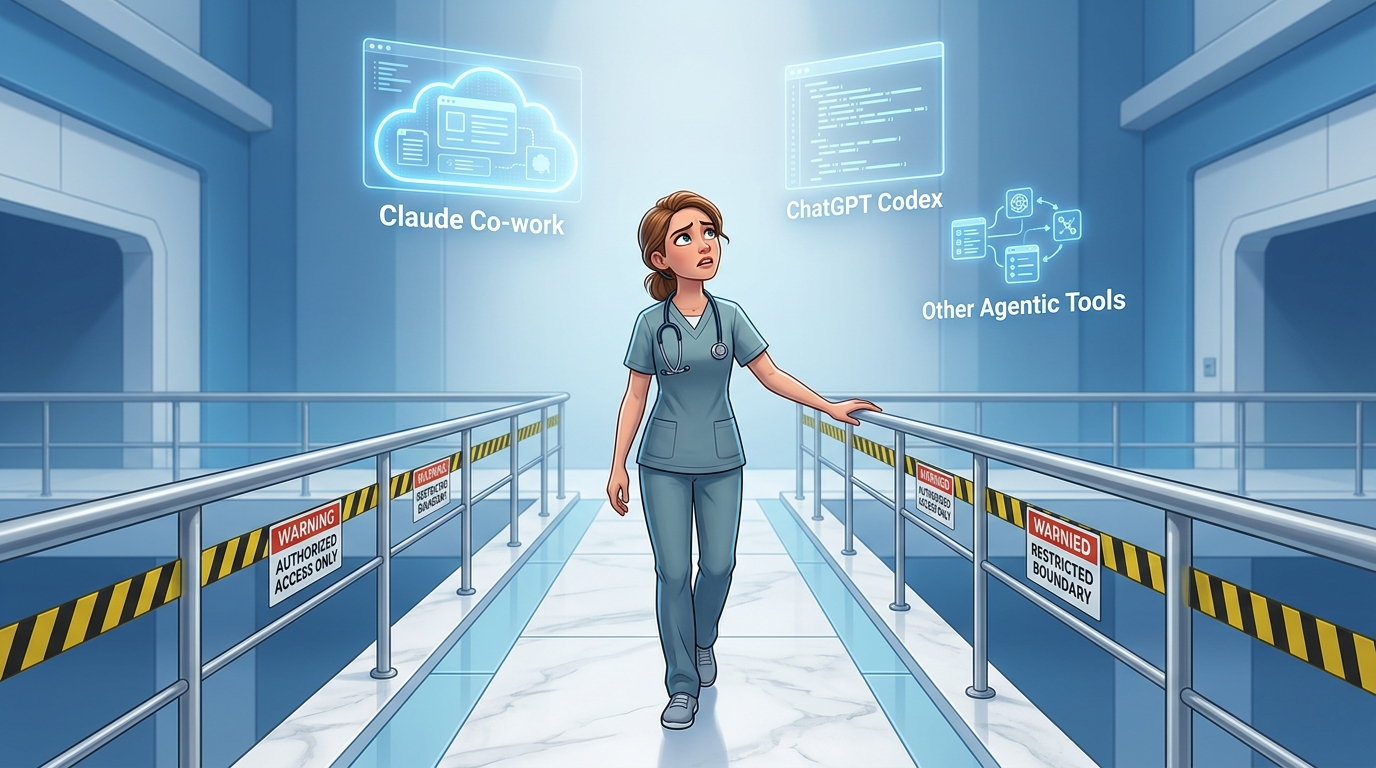

At my day job, I use something very different.

That gap is what this post is about.

What “Agentic AI” Actually Means

Most people still think of AI as a chatbot. You type something in, it generates a response, and you copy it somewhere useful. That mental model made sense for 2023. It is increasingly out of date.

Agentic AI is a different category of tool. It does not just answer questions. It takes actions. It can read files on your system, write and save documents, execute multi-step workflows, and move between applications without you manually triggering each step. You give it a goal and it figures out the sequence of steps needed to get there.

The tools that do this are getting very good, very fast. And most healthcare workers cannot use them in any meaningful way at their jobs.

What Approved AI Actually Looks Like Right Now

Let me be concrete, because I think this conversation stays too abstract most of the time.

At my workplace, I have access to two sanctioned AI tools. One is a paid Microsoft Copilot license, which integrates with Office 365. It can help draft documents, suggest Excel formulas, and assist with content inside the Microsoft environment. That is genuinely useful. I appreciate having it.

The other is a proprietary AI tool built specifically for our organization. It is HIPAA-compliant, which matters enormously in a healthcare setting, and it can do a reasonable job generating content when you give it the right prompts. It knows our context. It takes privacy seriously.

Here is what it cannot do: create a file.

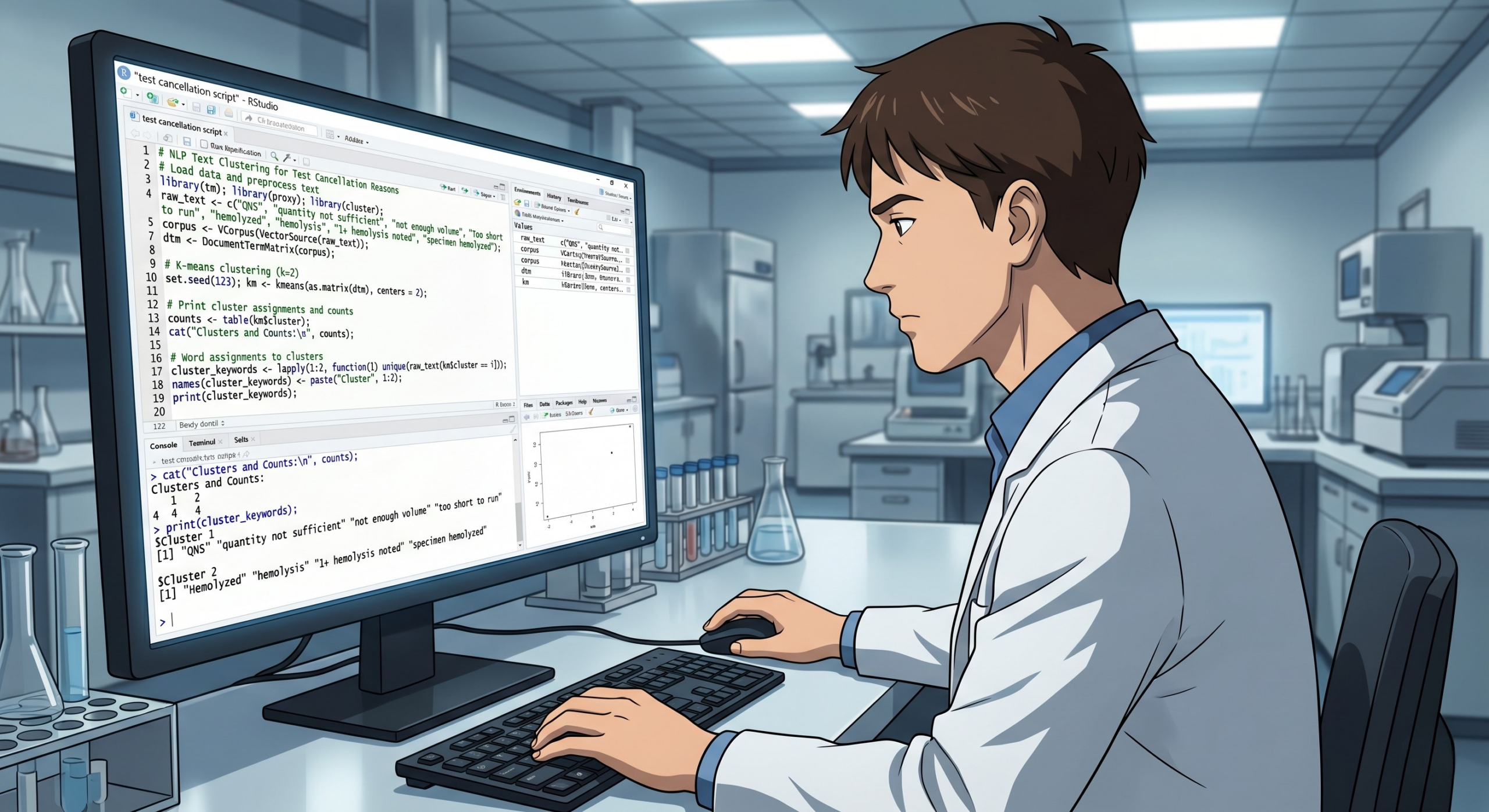

If I ask it to draft a table, I get text I have to copy and paste into Excel. If I ask it to summarize something in a format I can share, I copy and paste it into Word. If I generate code, I copy and paste it into VS Code or a Jupyter notebook. Every output is an island. Getting anything into a working document is a manual step that I have to take every single time.

That limitation is not a bug. It is a design choice. And understanding why it was made is actually the key to understanding the whole governance problem.

The Governance Logic Is Not Wrong

When an AI tool can write directly to a file system, it can write to the wrong place.

Think about what that means in a healthcare setting specifically. Patient data lives on clinical workstations. Shared drives hold protected health information. Documents get synced to cloud storage automatically. One AI agent that has been granted file access, operating in an environment where the user does not fully understand what file access means, can potentially write data somewhere it absolutely should not go. And not out of malice. Out of mundane automation doing exactly what it was told.

The organizations making these governance decisions are not being paranoid. They are looking at a real risk surface: a powerful tool, a large and varied workforce, a regulatory environment with no tolerance for PHI exposure, and a user population that ranges from deeply tech-savvy to completely unfamiliar with how these systems actually work. That is a hard combination to govern. Restricting the tool to outputs that require a human to manually move them somewhere is a conservative choice, but it is a defensible one.

I am not going to pretend I do not find the copy-paste workflow frustrating. I do. But I understand why it exists.

The Gap That Actually Needs Attention

Here is what I think gets missed in this conversation.

The governance frameworks being built right now are largely being designed without meaningful input from the people who will live inside them. IT and compliance teams are making decisions about what AI can and cannot do at the enterprise level, and those decisions are reasonable on their face, but they are being made without a clear picture of what a frontline professional is actually trying to accomplish in a given workday.

The result is tools that are safe but hobbled in ways that reduce their usefulness to near zero for certain workflows. And when approved tools are frustrating enough, people find workarounds. I wrote about that directly in Issue 3, when I covered shadow AI in the clinical lab. Burnout and staffing pressures push people toward whatever works, whether it has been sanctioned or not. Conservative governance that does not account for actual user needs does not eliminate that behavior. It just drives it underground.

Agentic AI is coming into healthcare environments whether enterprise governance is ready or not. The 2026 clinical lab trend reports are unambiguous about this: AI that recommends actions is already in labs. AI that takes actions, placing reflex orders, adjusting staffing queues, flagging turnaround time breaches and routing specimens accordingly, is on its way. The governance gap is not going to hold that back. It is only going to determine whether the people working inside these systems understand what is happening and have any say in how it is designed.

What Thoughtful Deployment Could Actually Look Like

I want to be clear that I am not arguing for removing the guardrails. I am arguing for building them better.

Defined permissions instead of blanket restrictions. Role-based access that reflects what different users actually need. Clear policies about which directories an AI agent can and cannot touch, and why. Mandatory training before access, not as an afterthought. Audit trails that capture what the AI did so that someone can actually review it.
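To make that list less abstract, here is a minimal sketch of how those pieces could fit together in code. It is an illustration under my own assumptions, not a description of any real product: the role names, directory paths, user ID, and every function name below are hypothetical. The idea is simply that an agent's write request gets checked against training status and a per-role directory allowlist, and that every decision, allowed or denied, lands in an audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from pathlib import PurePosixPath

# Hypothetical policy data. Real roles, directories, and training records
# would come from the institution's own systems.
ROLE_WRITE_DIRS = {
    "lab_quality": [PurePosixPath("/work/quality_reports")],
    "analyst": [PurePosixPath("/work/analytics"), PurePosixPath("/work/drafts")],
}
TRAINED_USERS = {"jdoe"}


@dataclass
class AuditLog:
    """In-memory audit trail; a real one would be append-only and stored centrally."""
    entries: list = field(default_factory=list)

    def record(self, user, role, path, allowed, reason):
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user, "role": role, "path": str(path),
            "allowed": allowed, "reason": reason,
        })


def check_write(user, role, target):
    """Return (allowed, reason) for a proposed write. Nothing is written here."""
    path = PurePosixPath(target)
    if user not in TRAINED_USERS:
        return False, "training not completed"
    # Reject relative paths and '..' segments so a request cannot climb out
    # of an allowed directory. Symlinks are not resolved in this sketch.
    if not path.is_absolute() or ".." in path.parts:
        return False, "path must be absolute with no '..' segments"
    for base in ROLE_WRITE_DIRS.get(role, []):
        if base in path.parents:
            return True, f"inside {base}"
    return False, "directory not permitted for role"


def request_write(log, user, role, target):
    """Check a proposed write and record the decision either way."""
    allowed, reason = check_write(user, role, target)
    log.record(user, role, target, allowed, reason)
    return allowed


log = AuditLog()
request_write(log, "jdoe", "analyst", "/work/drafts/summary.docx")
request_write(log, "jdoe", "analyst", "/clinical/phi/results.csv")
for entry in log.entries:
    print(entry["path"], entry["allowed"], entry["reason"])
```

The design choice worth noticing is that denials are logged with a reason, not just silently blocked. That is what lets a reviewer see which workflows people keep attempting and decide whether the policy or the training needs to change.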

None of those things are impossible. They are just not built yet in most healthcare institutions. And they will not get built well if the only people in the room when they are designed are compliance officers and IT architects.

The professionals who are best positioned to contribute to that conversation are the ones who understand both the clinical environment and the tools. People who work in regulated, quality-driven settings where precision matters and mistakes have real consequences. People who are already thinking carefully about what these tools can and cannot do.

That is not a small group. It is just a group that is almost never asked.

A Shameless Plug, and I Mean It

If you work in healthcare IT governance, compliance, or institutional AI policy and you are building the frameworks that will govern how these tools get deployed, I would genuinely like to be part of that conversation.

I am a frontline user who has spent real time with agentic AI tools in my personal workflow. I understand what they can do, where they fail, and what questions a clinical professional is actually trying to answer when they reach for one. I would be happy to contribute that perspective to a committee, a working group, a pilot evaluation, or an informal conversation. I am not coming with an agenda. I just think the people building these guardrails would make better ones if they talked to more of us before finalizing anything.

If that sounds useful, hit reply or reach out directly.

The Standard Is Still Yours to Set

The clinical lab has always held itself to an accuracy standard that the rest of healthcare depends on. That standard exists because laboratorians fought for it, documented it, and built quality systems rigorous enough to defend it. AI governance in healthcare could learn something from that approach. Not caution for its own sake, but precision about what the risks actually are, who bears them, and what a defensible response looks like.

That kind of governance does not get built from the top down by people who have never worked a shift. It gets built by organizations smart enough to ask the right people before they finalize the rules.

See you next week.

Meredith

Meredith Hurston is a healthcare quality professional, doctoral student in AI and machine learning, and the author of Algorithmic Oversight. Lab Notes is her newsletter documenting the practical, honest side of working with AI in healthcare.