Why I Went Back to School at 49 to Get My PhD in AI

For a long time, my position on graduate school was simple: no. Absolutely not. You could not pay me to go back.

I had a career. A house. A full life. The idea of sitting in a classroom again, writing papers, jumping through academic hoops…it held zero appeal. I was done with that chapter.

And then a spreadsheet changed my mind.

The Task That Started Everything

I have worked in healthcare quality for more than 20 years. In my current role, one of my core responsibilities is classifying patient safety events, also known as adverse event reports, for the Department of Pathology. Every month, I do a retrospective review of the previous month’s events. We can get anywhere from 100 to 180 reports in a given month.

If you have never done this kind of work, let me paint the picture. You read each event. You figure out what type of incident it was. You make sure it gets routed to the right person for investigation and follow-up. You are essentially the traffic controller for everything that went wrong in the lab that month.

It is important work. It is also, I will be honest, extremely tedious.

The emotional weight is real. Reading hundreds of these reports month after month, you start to recognize patterns. The same types of mistakes. The same contributing factors. The same categories of failure. That repetition builds a quiet cynicism that is hard to shake.

There is a concept called the “third victim” in patient safety, which refers to the healthcare workers who carry the secondary trauma from being involved in or investigating adverse events. When your job is to read detailed accounts of what went wrong and why, you absorb some of that weight. It accumulates.

And on top of the emotional load, there is a significant cognitive one. Classifying these events is not always straightforward. Two people reading the same report can reasonably land on different primary categories. The process is inherently subjective, which means every single classification decision requires judgment. Making hundreds of those judgment calls every month is exhausting.

This is exactly where I started to see the potential for a machine to help. Not to replace the human review, but to do the first pass. To offer a classification that the reviewer can agree with or override. To take that initial cognitive load off the person doing the reading and introduce some consistency into a process that currently varies depending on who is reviewing it.
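For readers who think in code, the "first pass, then agree or override" idea can be sketched in a few lines. This is only an illustration under assumptions: the function names, the record layout, and the category names are hypothetical and do not come from any real event-reporting system.

```python
def first_pass_review(suggested, reviewer_choice=None):
    """Record a machine suggestion alongside the reviewer's final decision.

    reviewer_choice=None means the reviewer accepted the suggestion as-is.
    """
    final = suggested if reviewer_choice is None else reviewer_choice
    return {
        "suggested": suggested,
        "final": final,
        "overridden": final != suggested,
    }


def override_rate(records):
    """Fraction of events where the reviewer changed the suggested class."""
    if not records:
        return 0.0
    return sum(r["overridden"] for r in records) / len(records)


# Hypothetical categories, for illustration only.
records = [
    first_pass_review("labeling"),                                # accepted
    first_pass_review("delay", reviewer_choice="communication"),  # overridden
    first_pass_review("labeling", reviewer_choice="labeling"),    # confirmed
    first_pass_review("delay"),                                   # accepted
]
print(override_rate(records))
```

Tracking the override rate over time is one simple way to see whether the suggestions are actually saving the reviewer any effort. The human decision is always the one that gets recorded as final.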

The system we used had machine learning built into it. But it was not doing what I needed. Instead of assigning one primary classification, it would tag multiple labels per event. A single event could have 5+ label tags assigned to it. For the kind of analysis I needed to do, that was not workable. Additional secondary tags are fine. What I needed was a clear primary class for each event.
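One way to picture the difference between "many tags" and "one primary class" is the sketch below. It assumes, purely for illustration, that each tag comes with a confidence score; nothing in the system described above is known to expose scores like this, and the tag names are made up.

```python
def primary_and_secondary(tag_scores):
    """Split scored tags into one primary class and ordered secondary tags.

    tag_scores: dict mapping tag name -> confidence score (an assumption;
    a real system may not provide scores at all).
    """
    if not tag_scores:
        return None, []
    # Highest score first; ties are broken alphabetically so the
    # result is deterministic.
    ranked = sorted(tag_scores.items(), key=lambda kv: (-kv[1], kv[0]))
    primary = ranked[0][0]
    secondary = [tag for tag, _ in ranked[1:]]
    return primary, secondary


# Hypothetical multi-label output for a single event.
event_tags = {"Specimen labeling": 0.62, "Communication": 0.21, "Delay": 0.48}
primary, secondary = primary_and_secondary(event_tags)
print(primary)
print(secondary)
```

The secondary tags are kept rather than thrown away, which matches the point above: extra tags are fine, as long as each event ends up with exactly one primary class.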

After about 11 years of doing this manually, I hit a wall. I thought: there has to be a better way.

The Book That Changed Everything

I started Googling. Then I ended up on YouTube. I was looking for anything that could help me assign a single classification to narrative text.

At some point I came across a book called Automate the Boring Stuff with Python. The title alone grabbed me. That is exactly what I wanted to do. Automate the boring stuff. So I bought it.

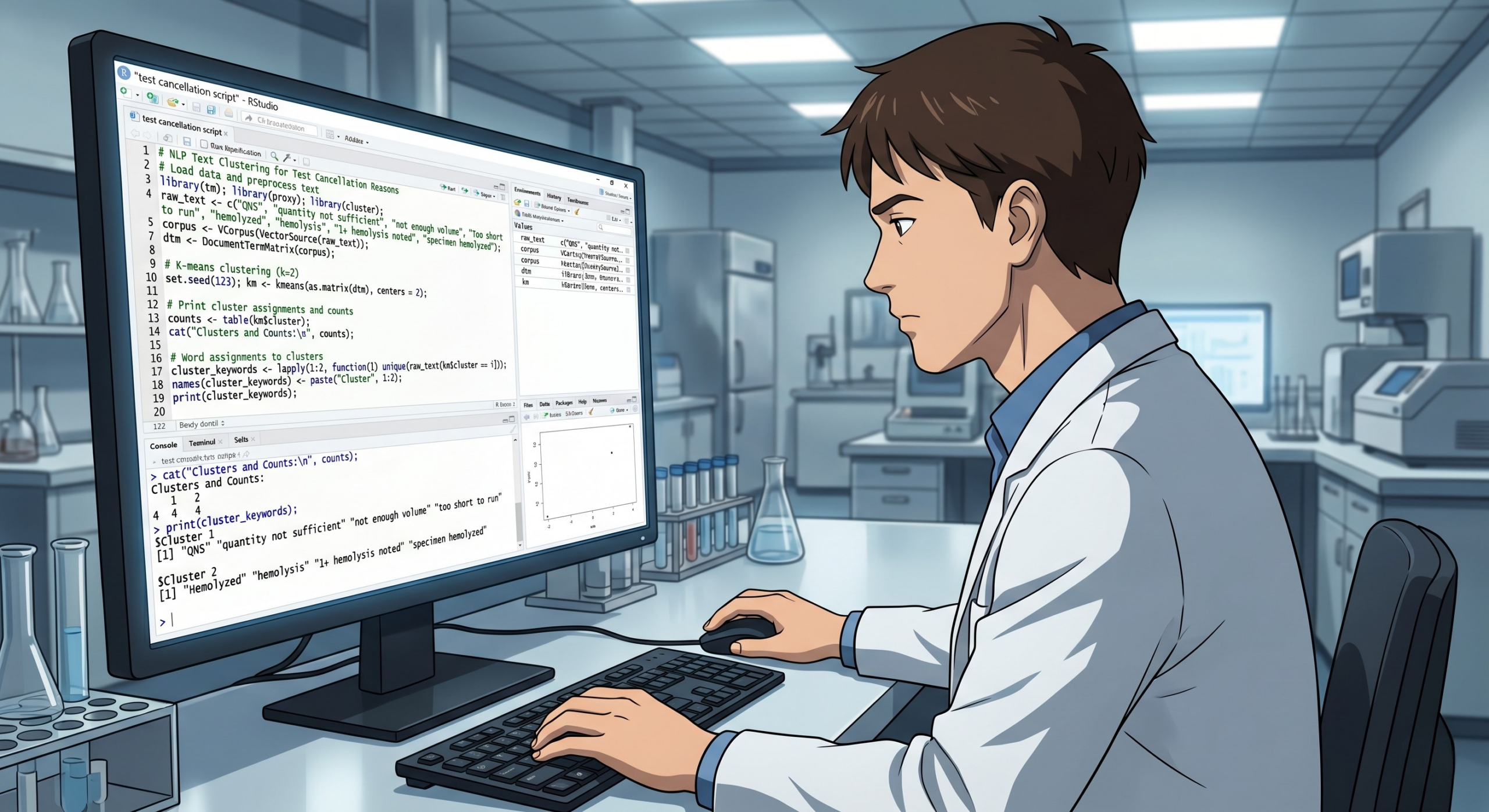

That was my introduction to Python. I had no coding background. The only programming language I had ever tried was R, and that did not go well. Python felt more approachable. I kept digging.

Eventually, I found a YouTube tutorial that was doing almost exactly what I needed. It was not using patient safety event data, but the task was the same: take narrative text, assign it a single label. I could transfer that to my project.

So I trained my first machine learning model. I used my existing patient safety events as training data, cleaned it up as best I could, and fine-tuned a classifier to recognize new events.
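To make "take narrative text, assign it a single label" concrete, here is a minimal sketch using only the Python standard library. It is a stand-in, not the model described in this post: the post does not say which library or architecture the tutorial used, so a small bag-of-words naive Bayes classifier is swapped in here, and the example reports and labels are invented.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and keep alphabetic words only."""
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesTextClassifier:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.class_counts = Counter(labels)       # documents per class
        self.word_counts = defaultdict(Counter)   # word counts per class
        self.vocab = set()
        for text, label in zip(texts, labels):
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict(self, text):
        """Return the single most likely label for one narrative."""
        tokens = tokenize(text)
        total_docs = sum(self.class_counts.values())
        vocab_size = len(self.vocab)
        best_label, best_score = None, -math.inf
        for label in sorted(self.class_counts):
            # Start from the log prior for this class.
            score = math.log(self.class_counts[label] / total_docs)
            total_words = sum(self.word_counts[label].values())
            for tok in tokens:
                count = self.word_counts[label][tok]
                score += math.log((count + 1) / (total_words + vocab_size))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Invented training examples, not real patient safety events.
texts = [
    "specimen received without patient label",
    "label on tube did not match requisition",
    "result reported two days late",
    "turnaround time exceeded for stat test",
]
labels = ["labeling", "labeling", "delay", "delay"]

clf = NaiveBayesTextClassifier().fit(texts, labels)
print(clf.predict("tube arrived with wrong label"))
print(clf.predict("stat result was late"))
```

Four training sentences are nowhere near enough for real use. The point is only the shape of the task: narrative text goes in, exactly one label comes out.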

It came back at 50% accuracy.

That is not good, objectively. On a two-way choice, a coin flip gets you 50%. But I was not disappointed. I was thrilled. Because it worked. The model ran. It produced classifications. Proof of concept: confirmed. I knew I could improve it from there.
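How bad 50% really is depends on how many categories there are and how unevenly the events fall into them. The short sketch below shows the two baselines usually worth checking. The four categories and their counts are made up for illustration; the post does not state the real number of classes.

```python
from collections import Counter


def chance_accuracy(n_classes):
    """Expected accuracy of guessing uniformly at random."""
    return 1.0 / n_classes


def majority_baseline(labels):
    """Accuracy of always predicting the most common class."""
    counts = Counter(labels)
    return counts.most_common(1)[0][1] / len(labels)


# Hypothetical month of 100 events across four made-up categories.
labels = ["A"] * 40 + ["B"] * 30 + ["C"] * 20 + ["D"] * 10

print(chance_accuracy(2))         # two classes: the coin flip
print(chance_accuracy(4))         # four classes: random guessing does worse
print(majority_baseline(labels))  # always answering "A"
```

With numbers like these, a model at 50% would already beat both random guessing and the always-pick-the-biggest-category shortcut. With only two balanced categories, it would not.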

The Gap I Couldn’t Bridge Alone

Here is where I ran into a ceiling.

I knew I had something. A working prototype, a real problem it could solve, and genuine motivation to make it better. But every time I tried to go deeper, to understand why the model was underperforming, what I could do to improve it, how the architecture actually worked, I kept hitting walls I did not have the foundation to get through.

I had never taken a formal computer science or machine learning course. I had built this thing through tutorials and trial and error, and that got me to 50%. Getting past 50% was going to require something more.

I also started thinking bigger. If I was going to build this into something real, something I could take to other organizations or consult on, I needed the credentials to back it up. Not because I doubted myself, but because the people I would be working with would need a reason to trust me and the work I’d done.

How I Found the Black in AI PhD Prep Program

I was not actively looking for a PhD program. I want to be clear about that. I was a 48-year-old woman who had firmly decided that school was behind her.

But I came across Black in AI and their PhD preparation program, which helps students get admitted into doctoral programs focused on AI and machine learning. I figured I would check it out, see what was out there.

I got accepted. And as part of the program, we had to research PhD programs and identify ones that matched our goals and circumstances.

Here is where being a non-traditional student became the central factor.

I am a homeowner. I have a full-time job. I was not in a position to quit and become a full-time graduate student for five years. The standard PhD pipeline, where you get a stipend, work in a research lab, and spend years as a graduate research assistant, was not a realistic option for my life.

I needed something built for someone like me.

Choosing the Right Program

I looked at three programs seriously.

One did not have formal coursework at all. You were essentially admitted and then expected to write your dissertation. That might work for someone who already has a deep technical foundation. But I needed the structure. I needed to build the fundamentals from the ground up, not walk in and be handed a blank page.

Another was a strong program, but it was more expensive and required attending Saturday classes for the duration of the degree. Every Saturday committed until I was done. Given my schedule and responsibilities, that was not sustainable.

Walsh College checked every box. Evening and asynchronous or remote classes. A real coursework requirement that builds the foundation I needed. A dissertation. And a format that could work alongside a full-time career. That is where I enrolled.

What the PhD Is Actually Giving Me

I am learning things I did not know. Model architecture. Fine-tuning strategies. Loss functions. Learning rates, softmax, and regularization. These are the mechanics underneath the tools I had been using on instinct.

It is also giving me the foundation to have different conversations. When I sit down with a hospital administrator or a department head and say that AI can help with this workflow, I need to be able to explain why, what the limitations are, and what responsible implementation actually looks like. The PhD is building that capacity.

What AI Is Already Doing in the Lab

Here is the part I want to make sure is clear: this is not theoretical. AI is already in the clinical laboratory, and it is expanding.

Digital pathology is probably the furthest along. Both Quest Diagnostics and LabCorp have invested heavily here, and both are using the same platform. In 2024, Quest acquired PathAI Diagnostics and licensed its AISight image management system to support pathology labs and cancer diagnosis at scale. Around the same time, LabCorp expanded its PathAI partnership to roll out the AISight Dx platform across its U.S. network, effectively moving most lab workflows to digital, including the review of specimens. The goal is to eliminate the need for glass slides. Whole slide imaging plus AI overlay is not a pilot project anymore. It is a deployment.

Hematology has been building AI capability for years. CellaVision, which has been in the digital cell morphology space for a long time, now layers machine learning over traditional differential review for classification and flagging. Sysmex has digital scanning with AI-based preclassification of white blood cells. Beckman Coulter uses AI-powered image recognition for cell identification and clinical decision support. The direction across all of these platforms is the same: the analyzer does the first pass, the tech reviews and confirms. That is the same model I was trying to build for adverse events.

Microbiology is seeing meaningful AI adoption beyond traditional automation. BD Kiestra and Copan WASPLab are both using AI to read culture plates, track growth patterns, and prioritize which plates need human eyes. Copan has a system that is designed to automatically segregate positive plates and release negative ones, reducing the volume of plates a tech needs to physically review. BD’s Kiestra has similarly evaluated AI algorithms for detecting bacterial growth and managing sterile culture workflows. The result is faster turnaround and less time spent looking at plates that will never grow anything.

Autoverification is where AI has a significant near-term opportunity that most labs have not fully realized yet. Most labs already run rule-based autoverification: a result passes or gets flagged based on static parameters. The next generation is moving toward ML-driven autoverification that learns from result patterns over time. Delta checks, reflex triggers, critical value flagging…all of this is becoming AI territory. The shift from hard-coded rules to adaptive models could meaningfully reduce unnecessary holds and manual review volume.
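For anyone who has not seen rule-based autoverification written down, here is a minimal sketch of the "static parameters" version, including a delta check and critical value flagging. Everything in it is an assumption made for illustration: the `Rule` fields, the function name, and especially the numeric limits, which are placeholders and not clinical thresholds from any lab or vendor.

```python
from dataclasses import dataclass


@dataclass
class Rule:
    low: float            # lower limit of the auto-release range
    high: float           # upper limit of the auto-release range
    critical_low: float   # at or below this, always hold
    critical_high: float  # at or above this, always hold
    max_delta: float      # largest allowed change from the prior result


def autoverify(value, rule, previous=None):
    """Return (decision, reason) for one result under static rules."""
    if value <= rule.critical_low or value >= rule.critical_high:
        return "hold", "critical value"
    if previous is not None and abs(value - previous) > rule.max_delta:
        return "hold", "delta check failed"
    if value < rule.low or value > rule.high:
        return "hold", "outside auto-release range"
    return "release", "passed all rules"


# Placeholder limits for a hypothetical analyte; NOT real clinical values.
EXAMPLE_RULE = Rule(low=3.0, high=5.5, critical_low=2.5,
                    critical_high=6.5, max_delta=1.0)

print(autoverify(4.1, EXAMPLE_RULE, previous=4.0))
print(autoverify(5.2, EXAMPLE_RULE, previous=3.9))
print(autoverify(6.8, EXAMPLE_RULE))
```

Every threshold here is fixed by hand. That is exactly the part an ML-driven approach would try to learn from historical result patterns instead, with a technologist still reviewing whatever gets held.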

Operations may be the least visible application, but it has high potential impact. Staffing and workload modeling based on historical volume patterns, instrument QC anomaly detection, turnaround time prediction, and resource planning are all areas where the data already exists and the analytical tools are becoming accessible. Most lab information systems are sitting on years of structured operational data that has never been modeled for anything other than compliance reporting. That is starting to change.

The Part I Did Not Expect

I thought going back to school at 49 would feel like a sacrifice. Like something I was forcing myself to do for a future payoff.

It does not feel like that at all.

It feels like the first time in a long time that I am building something. The 50%-accurate model sitting on my laptop is a proof of concept for something that could actually matter. The coursework is not abstract. It is directly connected to a problem I have been living with for 16 years.

I said I would never go back to school. I meant it at the time.

But I had not yet found the problem worth going back for.

I Want to Hear From You

If you work in the lab and have ever looked at some part of your workflow and thought “there has to be a better way to get through all of this,” you know the feeling. The copy-pasting, the manual lookups, the tasks that eat up your time without actually using your brain. That thought is worth following. That is where I started. A book title caught my attention because it named exactly what I was tired of. And it changed everything.

Are you thinking about a transition like this? Have you run into a workflow problem that you suspect a computer could help solve? Send me an email and tell me what you are seeing. I read each one.

See you next week.

Meredith

Meredith Hurston is a healthcare quality professional, doctoral student in AI and machine learning, and the author of Algorithmic Oversight. Lab Notes is her newsletter documenting the practical, honest side of working with AI in healthcare.